Information Security

Modern Malware

Past Malware

Before 2005, most malware was used for "fun" and/or "fame".

During this era, malware was often designed for the sake of experimentation, and was used to demonstrate some capability - for example, how fast a piece of malware could spread.

Only a few instances of malware in this time period were actually used for denial-of-service attacks and website defacements.

Modern Malware

Since 2005, malware has been used to make compromised computers and networks perform malicious activities for financial and even geopolitical gain.

Whereas computers of the past were targets of malware, they are now weapons that malware can control and deploy for profit and gain.

Since modern malware is now used to do real work, it tends to be technically sophisticated and to use the latest technologies.

For example, it may use popular peer-to-peer protocols and applications to set up communications, or cloud servers to support its activities. Some malware uses the latest cryptographic algorithms for authentication and encryption, protecting its communications from subversion and analysis.

Botnet

A bot, also called a zombie, is a compromised computer under the control of an attacker.

The bot code present on the compromised system is responsible for communicating with the attacker's server and carrying out malicious activities per the instructions it receives from this server.

A botnet, then, is a network of bots controlled by an attacker and used to perform coordinated malicious activities.

With a network of bots, the aggregated computational power can be very large. An attacker can launch a variety of attacks using such a powerful platform.

Indeed, most Internet-based cyber attacks today are carried out by botnets.

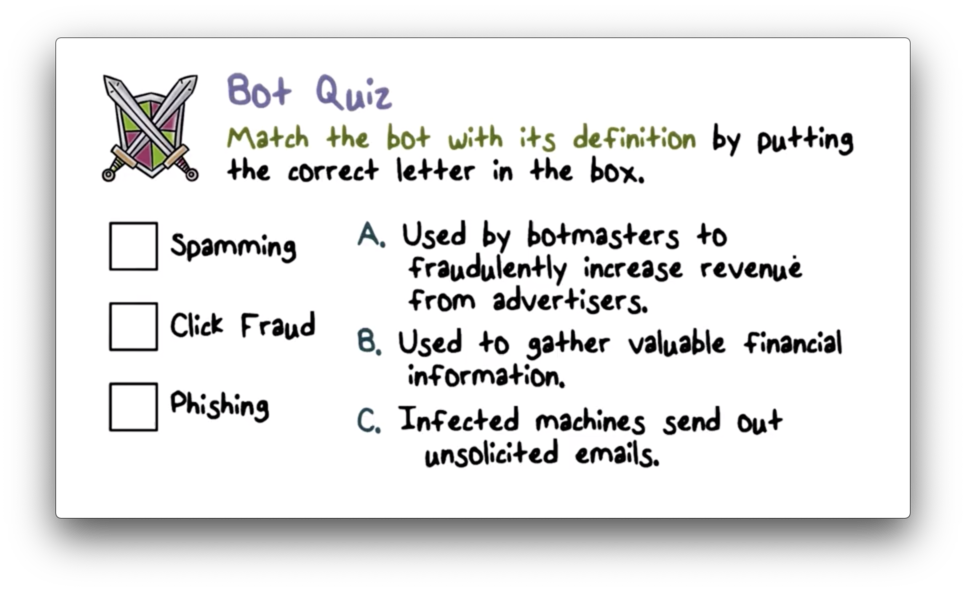

Bot Quiz

Bot Quiz Solution

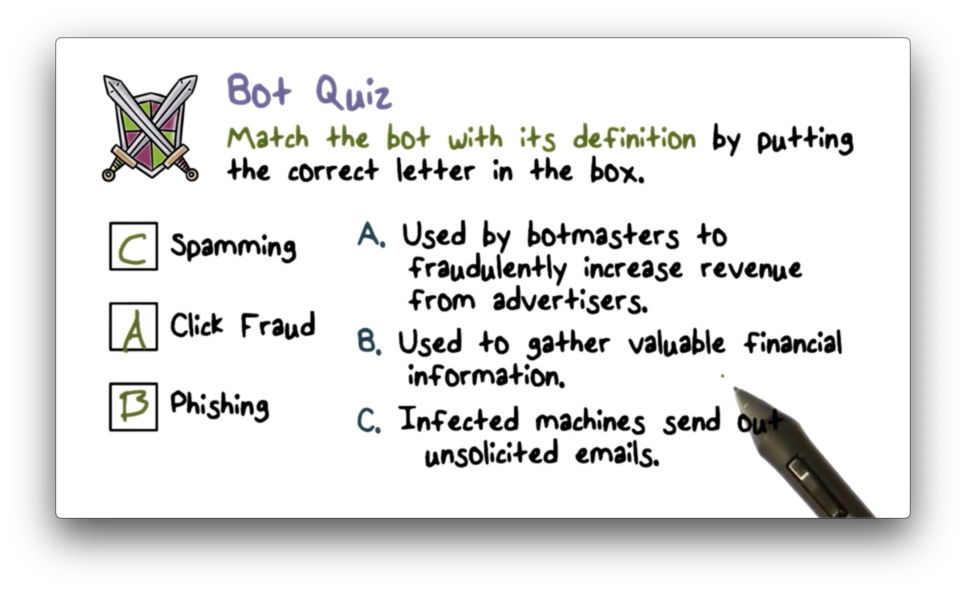

Attacks and Frauds by Botnets

Regardless of the method used, modern botnets usually have one of two goals in mind: illegal profits or political activism. Here are some examples of attacks and frauds by botnets.

DDoS Using Botnets

Let's look at a typical DDoS scenario using botnets.

First, the attacker selects a victim and decides when to attack. Next, the attacker sends a command to all of the bots in the botnet. This command might tell the bots to all send connection requests to the victim at once.

As a result, the victim receives connection requests from many bots at the same time and becomes overloaded, and the denial of service is complete.

Amplification Distributed Reflective Attacks

A typical defense against DDoS is to buy more servers or bandwidth. However, DDoS attacks can be amplified to make this type of defense very expensive.

The following is an example of such an amplification attack.

On the Internet, there are many open recursive DNS servers that any Internet-connected machine can query. A typical DNS query asks for the IP address associated with a particular domain name.

Users can also query these servers for the TXT record for a domain. This record often contains a lot of information, and the size of the query response can be more than 1500 bytes.

Attackers will instruct their bots to query these servers for this TXT record. They will spoof (forge) the query such that the source IP address will point to the victim's IP address. As a result, the response will be sent to the victim, not the bot.

The attacker thus amplifies their query traffic - at around 60 bytes per query - into a much larger amount of attack traffic - at 1500+ bytes per response - directed at the victim.

With just a few thousand bots, querying multiple DNS servers, the attacker can send several gigabytes of traffic to the victim.
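The arithmetic behind this amplification can be sketched in a few lines. The 60-byte query and 1500-byte response come from the text above; the bot count and the number of queries per bot are hypothetical values chosen only to illustrate the scale, and the function names are made up for this example.

```python
def amplification_factor(query_bytes: int, response_bytes: int) -> float:
    """Ratio of response size to query size for one reflected query."""
    return response_bytes / query_bytes


def reflected_traffic_bytes(bots: int, queries_per_bot: int, response_bytes: int) -> int:
    """Total bytes arriving at the victim if every spoofed query is answered."""
    return bots * queries_per_bot * response_bytes


# Sizes taken from the text: roughly 60 bytes per query, 1500 bytes per response.
factor = amplification_factor(60, 1500)

# Hypothetical workload: 2,000 bots, each sending 1,000 spoofed queries.
total = reflected_traffic_bytes(bots=2000, queries_per_bot=1000, response_bytes=1500)

print(f"amplification: {factor:.0f}x")            # amplification: 25x
print(f"traffic at victim: {total / 1e9:.1f} GB")  # traffic at victim: 3.0 GB
```

Under these assumed numbers the bots themselves send only 120 MB of queries in total, which is why adding servers or bandwidth is an expensive defense for the victim.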

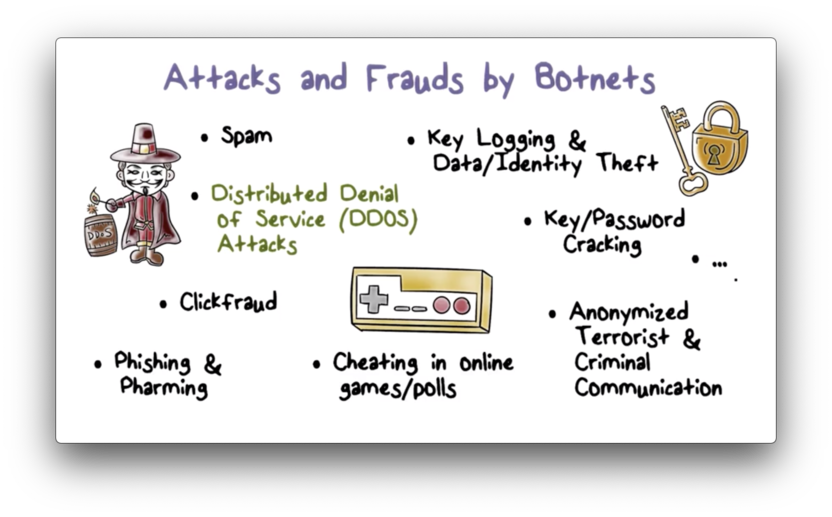

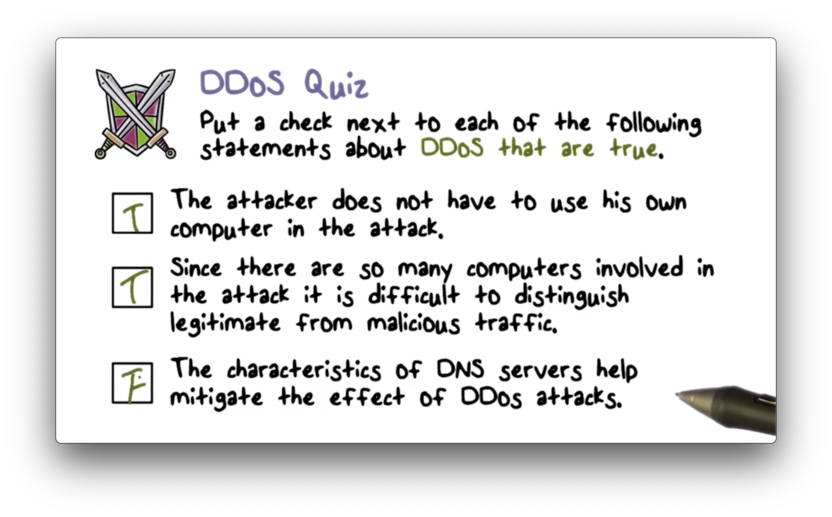

DDoS Quiz

DDoS Quiz Solution

Remember, the characteristics of DNS servers can be used to amplify the effects of DDoS attacks, not mitigate them.

Botnet Command and Control

In order for a botmaster to make use of a botnet, they need to communicate with the bots. In particular, there needs to be command & control (C&C) from the botmaster to the bots.

For example, a bot should be able to report its current status to the botmaster. A botmaster should be able to direct a bot to download the latest version of the bot code as well as instruct the bot to perform an attack.

Without C&C, a botnet is just a collection of isolated infected machines which the botmaster will not be able to aggregate into a single computational workhorse.

Botnet C&C Problem

Suppose we have downloaded some malware source code from the web, and we've configured it to our liking. We've used some social engineering to start spreading our compiled malware via email.

As our code spreads, we as botmasters are faced with an important question: How can we identify and contact the infected machines so we can start to use them?

The simplest solution is to have the infected victims contact us.

Botnet C&C: Naive Approach

We may hardcode our email address or our IP address in the malware to give the compromised computers a point of contact upon infection.

This approach is not stealthy. It is a safe bet that security admins will eventually find out that they have bots on their network. When they do, they may be able to obtain our bot code and recover the hardcoded address through malware analysis. With this address, they will be able to identify us.

This approach is also not robust. The single rallying point hardcoded in the malware also represents a single point of failure. For example, if we have hardcoded an email address and the email account has been banned, our command and control center becomes completely unreachable.

Botnet C&C Design

Since having the compromised computers contact the botmaster directly is neither stealthy nor robust, this strategy is only used by script kiddies and first-time malware authors.

A botmaster will focus on efficiency and reliability when designing bot code. They must be able to coordinate enough bots to perform a specific task, which requires efficient, reliable communication.

In addition, the botmaster will design for stealth and robustness. Stealth makes it hard to detect C&C traffic, while robustness makes it hard to disable or block this traffic.

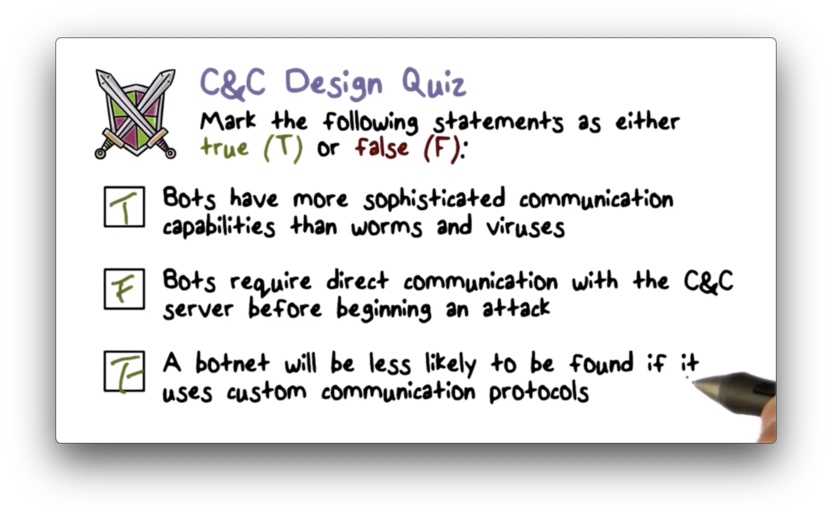

C&C Design Quiz

C&C Design Quiz Solution

The second answer is false. Bot code can have logic bombs or other triggers that enable bots to attack without contacting a C&C server.

The third answer is also false. A botnet that uses custom communication protocols is more likely to be detected, as admins observing the network are more likely to notice strange types of traffic flowing from their systems.

DNS Based Botnet C&C

Many botnets use DNS for C&C.

A key advantage is that DNS traffic is ubiquitous. Because DNS stores the mapping between domain names and IP addresses, it is used whenever a machine on the Internet needs to reach another machine by name. DNS is almost always allowed in a network, so DNS traffic will not stand out.

The botmaster will distribute the malware code, inside of which the domain name of the C&C server is hardcoded. Let's assume that this domain name is hackers.com.

To perform C&C, the bot will ask the DNS server for the IP address for hackers.com. The DNS server will tell the bots what the IP address is, and the bots will use that address for communication.

Botmasters prefer dynamic DNS providers because they allow frequent changes to the mapping between domain names and IP addresses. This means that the botmaster can rapidly switch where they host their server, and can quickly change the mapping so that hackers.com points to the new address.

If we can detect that hackers.com is used for botnet C&C, then we can identify any computer that connects to it as a bot.

How can we determine that a domain name is used for C&C?

The way that bots look up a C&C domain differs from the way machines look up a legitimate web server as a result of normal user activity.

For example, if a domain is being looked up by hundreds of thousands of machines all over the Internet, and yet this domain is unknown to Google search, this is an anomaly.

We can use anomaly detection at the dynamic DNS provider level to examine domain queries in order to identify potential botnet C&C servers.
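As a rough illustration of this kind of check, the sketch below flags domains that are looked up by many distinct clients yet are absent from a list of known sites. The log format, the `known_domains` set (a stand-in for "known to a search engine"), the threshold, and the function name are all assumptions made for this example; a real provider would work with far richer features.

```python
from collections import defaultdict


def suspicious_domains(query_log, known_domains, min_clients):
    """Return domains queried by at least `min_clients` distinct clients
    that do not appear in `known_domains`, sorted alphabetically."""
    clients_by_domain = defaultdict(set)
    for client_ip, domain in query_log:
        clients_by_domain[domain].add(client_ip)
    return sorted(
        domain
        for domain, clients in clients_by_domain.items()
        if len(clients) >= min_clients and domain not in known_domains
    )


# Hypothetical query log of (client IP, queried domain) pairs.
query_log = [
    ("192.0.2.1", "news.example"),
    ("192.0.2.2", "news.example"),
    ("192.0.2.3", "news.example"),
    ("192.0.2.1", "hackers.com"),
    ("192.0.2.2", "hackers.com"),
    ("192.0.2.3", "hackers.com"),
    ("192.0.2.4", "rare.example"),
]
known_domains = {"news.example"}

print(suspicious_domains(query_log, known_domains, min_clients=3))
# ['hackers.com']
```

Here `news.example` is popular but known, and `rare.example` is unknown but queried by only one client, so only hackers.com is both widely queried and unknown.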

Once we identify that hackers.com is used for botnet C&C, there are a number of responses available.

The DNS provider can point the entry for hackers.com to the IP address of a sinkhole. The result of this change is that bot traffic will be routed to the sinkhole instead of the C&C server, effectively severing the communication link between the botmaster and the bots.

In addition, security researchers can monitor the sinkhole and, by looking at the IP address of each incoming connection, can determine the distribution of the bots throughout the Internet.
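A minimal sketch of that measurement, assuming the sinkhole simply records the IPv4 source address of each incoming connection: duplicates are collapsed, and the distinct addresses are grouped by network prefix. The addresses, the /24 grouping, and the function name are illustrative choices, not something prescribed by the text.

```python
from collections import Counter
import ipaddress


def bots_per_network(source_ips, prefix_len=24):
    """Count distinct source addresses per IPv4 network of the given prefix length."""
    counts = Counter()
    for ip in set(source_ips):  # a bot may connect many times; count it once
        network = ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False)
        counts[str(network)] += 1
    return counts


# Hypothetical connection log from a sinkhole (documentation address ranges).
connections = [
    "192.0.2.10",
    "192.0.2.11",
    "192.0.2.10",
    "198.51.100.7",
    "203.0.113.5",
]

for network, bot_count in sorted(bots_per_network(connections).items()):
    print(network, bot_count)
```

With this sample log, the 192.0.2.0/24 network accounts for two distinct bots and the other two networks for one each.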

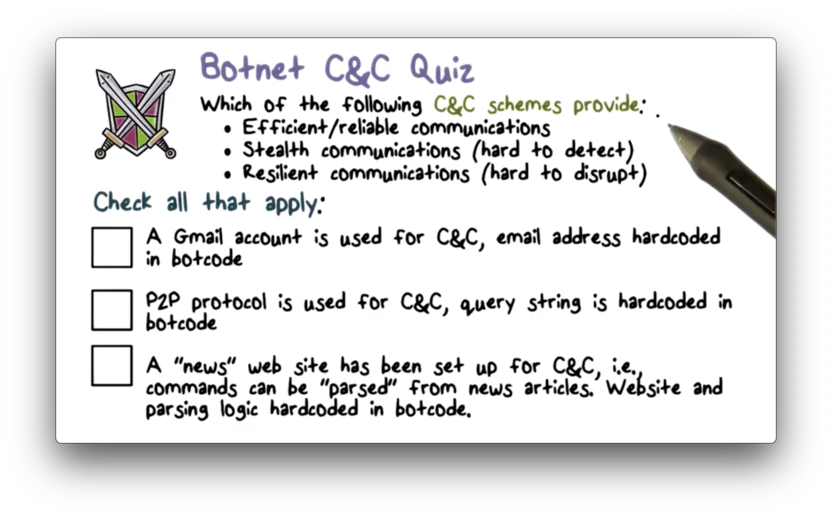

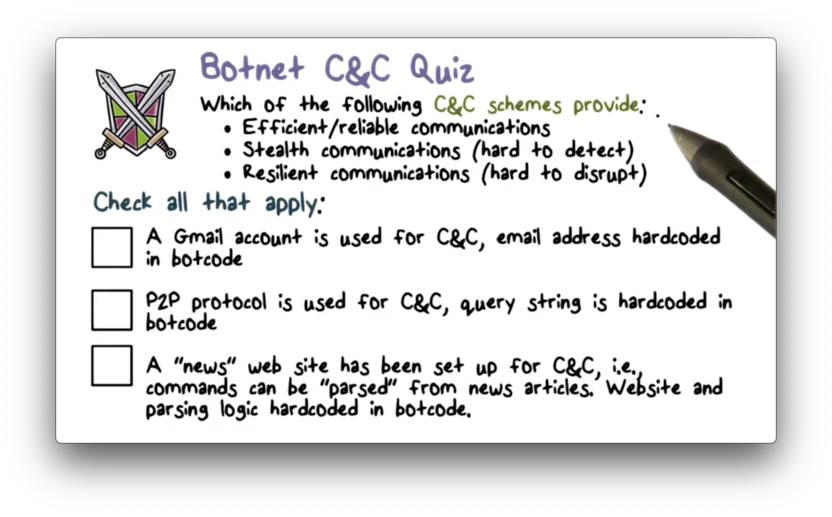

Botnet C&C Quiz

Botnet C&C Quiz Solution

A single Gmail account, hardcoded in bot code, is both easy to detect and easy to disrupt.

P2P traffic will easily stand out in an enterprise network where peer-to-peer communications are not typically allowed.

A news site can be hard to detect, because traffic to news websites is common. However, if the site is identified as being malicious, it can easily be blocked.

Advanced Persistent Threat

The latest type of modern malware is the advanced persistent threat (APT). Whereas botnets often have bots all over the Internet, APTs tend to be localized to specific target organizations.

Advanced

APTs can use advanced malware that is specifically crafted for a targeted organization.

Often, the malware used is just a customized version of common malware. Starting from common malware provides APT designers with both convenience and deniability; that is, security admins in the target organization will not suspect that common-looking malware is actually an APT.

The operations carried out by APTs are also advanced.

APTs are not used for common attacks and frauds such as spamming, click fraud, or phishing. An APT is much more likely to be used for a high-value operation, such as stealing the design of a new airplane.

Persistent

Once the malware gets into an organization, it will stay there for a long time, carrying out its attack in a "low and slow" fashion.

For example, rather than sending out the design of the airplane - a large amount of data - onto the Internet at once, the APT can break the data into multiple chunks and then transmit each chunk whenever a user connects to the Internet.

Of course, this approach exfiltrates the designs much more slowly, but can do so without raising any suspicion.

Threat

APTs are a very dangerous threat because they tend to target high value organizations and information.

APT Lifecycle

The attacker starts by identifying the attack target, such as an automobile or aerospace company.

Next, the attacker will research the target, looking for information that can help in the attack.

For example, through network scanning and analysis, the attacker can learn about the target organization's network and, from that, the vulnerabilities of its network services.

Many companies list their C-level executives on their webpages, and attackers can research these individuals to find their company email addresses, which they can use as a contact point.

Once they have completed their research, the attacker can develop a method to penetrate the organization's network.

For example, the attacker may exploit a zero-day vulnerability in the company's web server.

Alternatively, they may choose to engage in a so-called spear phishing attack. This type of phishing attack is usually directed to a C-level executive within the organization, and is called spear phishing because it is aimed at a single individual that the attacker has spent a lot of time researching.

The exploit succeeds once the APT has gained a foothold within the organization and established communication back to the attacker.

Once the communication link has been created, the attacker can push software updates and commands to the APT, such as requesting that it exfiltrate confidential data back to the attacker.

Finally, the APT will try not to raise any suspicion so that it can remain undetected.

For example, it will only exfiltrate data to the attacker when there are other legitimate network connections. In addition, it will keep its footprint as small as possible, only infecting the machines that it needs for its tasks.

APT Characteristics

The most dangerous and advanced APTs are those that use a zero-day exploit or specially crafted malware.

A zero-day attack exploits a previously unknown vulnerability. Since the vulnerability is unknown at the time of the attack, intrusion detection systems will likely not have a signature for the exploit and, even if they did, there is no patch available.

Zero-day exploits can be very successful and very stealthy.

Similarly, specially crafted malware is usually designed to defeat the signature and behavior monitors of a particular detection system. Like zero-day exploits, this class of malware has a very high chance of succeeding and proceeding undetected.

APTs are characterized not only by their ability to thwart anti-malware software, but also by their ability to employ social engineering strategies to fool even the most sophisticated users.

For example, an APT might start out by passively monitoring email traffic in order to understand who speaks to whom, what certain people talk about, and what type of attachments are sent. With this knowledge, the APT can then successfully forge email from one person to another.

In addition, the APT can conduct man-in-the-middle attacks to make such social engineering strategies very successful.

For example, if an email recipient is uncertain about an email attachment, they may send an email out to the sender asking for clarification. The APT can intercept this message and respond as the "sender", confirming the message and the (malicious) attachment.

APTs are also designed to blend in with normal activities to avoid detection, achieving their goals in a "low and slow" fashion.

For example, if the APT is designed to change the setting of a controller in a nuclear plant, it will not make the changes all at once. Instead, it will make small incremental changes over time to accomplish the eventual attack goal. This was the strategy of the famous Stuxnet malware.

Because APTs are designed to blend in with normal system activities, it can be very hard for anomaly detection systems to catch them.
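To see why incremental changes are hard to catch, consider a deliberately simple detector that only flags a reading when it jumps by more than a fixed amount from the previous one. The detector, the threshold, and the readings below are all hypothetical and are meant as a toy model of the defender's problem, not a description of any real monitoring product.

```python
def step_detector(readings, max_step):
    """Return the indices where a reading differs from the previous one by more than `max_step`."""
    return [
        i
        for i in range(1, len(readings))
        if abs(readings[i] - readings[i - 1]) > max_step
    ]


# The same total change (100 -> 150), made abruptly and made gradually.
abrupt = [100, 100, 150, 150]
gradual = [100 + 2 * i for i in range(26)]  # 25 steps of size 2

print(step_detector(abrupt, max_step=5))   # [2]
print(step_detector(gradual, max_step=5))  # []
print(gradual[-1] - gradual[0])            # 50
```

Both sequences end at the same value, but only the abrupt one trips the detector. Catching the gradual one would require comparing against a longer-term baseline, which also tends to raise more false alarms.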

APTs are often designed to stay in the compromised organization for a long time, always looking for more valuable data to steal.

An APT can carry out many different attacks, on different users, in different parts of the system. At each step, the APT may use different malware code, and it may clean up after itself as it travels throughout its host.

In other words, the footprint of an APT often remains very small and ever-changing.

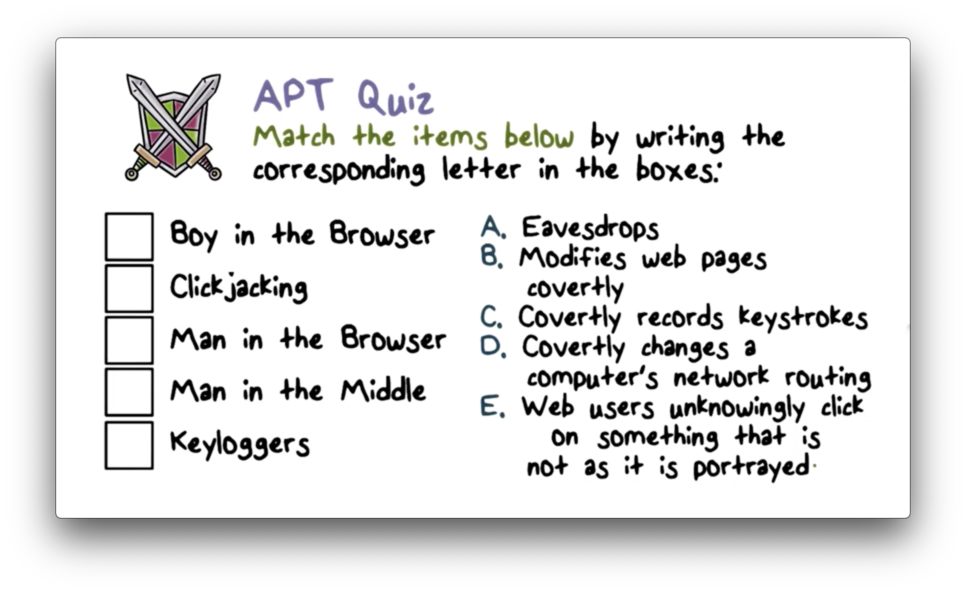

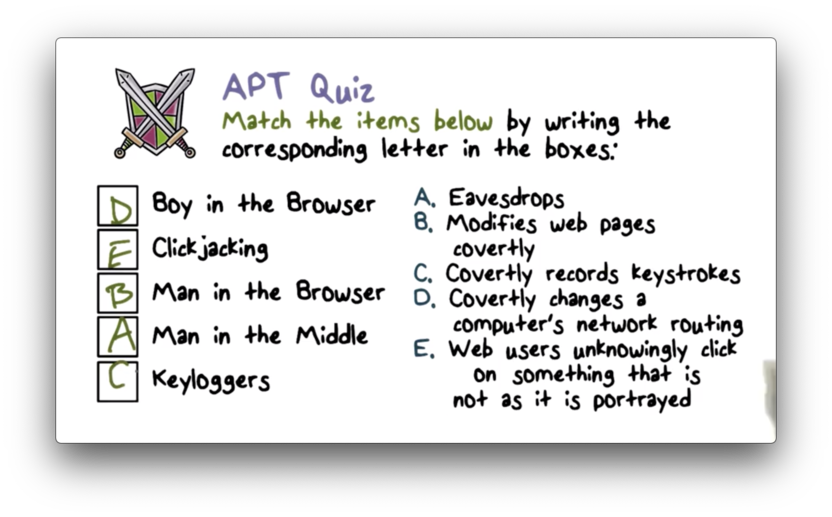

APT Quiz

APT Quiz Solution

APT Example

A CEO gets an email with a PDF attachment containing pie charts of sales activities. When the CEO opens the PDF using the Adobe Acrobat browser plugin, the crafted attack data embedded in the PDF document triggers a zero-day exploit that breaks out of the plugin sandbox and compromises the browser.

The attack code downloads a malicious browser extension, which is malware embedded within the browser. From this point on, the extension will infect every attachment the CEO sends via email.

Eventually, the malware gets onto the computer of a user who has admin access to the company's server. The malware can now steal the server credentials from the user and, with them, the valuable data residing on the server.

This example captures several key characteristics of APTs. First, the users do not realize that their computers and network have been compromised. Second, the APT activity blends in with normal user activity. For example, the APT doesn't send its own emails; instead, it only modifies emails sent by the CEO. Third, the APT takes its time to get to the key individual and steal the server credentials.

Malware Analysis

Malware analysis produces information about malware that can be used for detection and response. There are two typical approaches to malware analysis.

In static analysis, we look at the program, that is, the instructions of the malware, to understand what the malware would do if it were executed. We want to understand the malware's behavior without actually executing it.

There are limitations to static analysis. Some program behaviors that depend on runtime conditions or user input data cannot be precisely identified by inspecting the code alone. Additionally, binary code can be obfuscated.

Another approach is dynamic analysis. Here, we run the malware program and analyze its runtime behavior to understand what the malware does when it is executed.

We can perform dynamic analysis at different levels of granularity. A fine-grained analysis might look at the malware execution on an instruction-by-instruction basis. For a more coarse-grained approach, we might only look at the system calls that the malware invokes.
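The difference between the two granularities can be illustrated on a recorded execution trace. The trace format below, a list of `(kind, detail)` pairs, is invented for this sketch; real tracing tools produce their own formats.

```python
from collections import Counter

# Hypothetical execution trace: each event is either a single instruction
# or a system call made by the program under analysis.
trace = [
    ("insn", "mov eax, 5"),
    ("syscall", "open"),
    ("insn", "xor ebx, ebx"),
    ("syscall", "read"),
    ("syscall", "connect"),
    ("insn", "inc ecx"),
    ("syscall", "read"),
]


def fine_grained(trace):
    """Fine-grained view: every recorded event, in execution order."""
    return [detail for _, detail in trace]


def coarse_grained(trace):
    """Coarse-grained view: how often each system call was invoked."""
    return Counter(detail for kind, detail in trace if kind == "syscall")


print(len(fine_grained(trace)))       # 7
print(dict(coarse_grained(trace)))    # {'open': 1, 'read': 2, 'connect': 1}
```

The coarse view discards most of the detail but is much cheaper to collect and often enough to characterize behavior such as file access and network connections.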

Dynamic analysis is not without its limitations. Any particular run of the malware only reveals behaviors of that run. Malware can actively try to resist analysis by delaying execution until a certain command is given or a trigger is tripped. For any run, the conditions may not be right for the malware to exhibit certain behaviors, and so those behaviors are not revealed.

Because of the limitations of each type of analysis, a typical malware analysis system will employ both static and dynamic analysis.

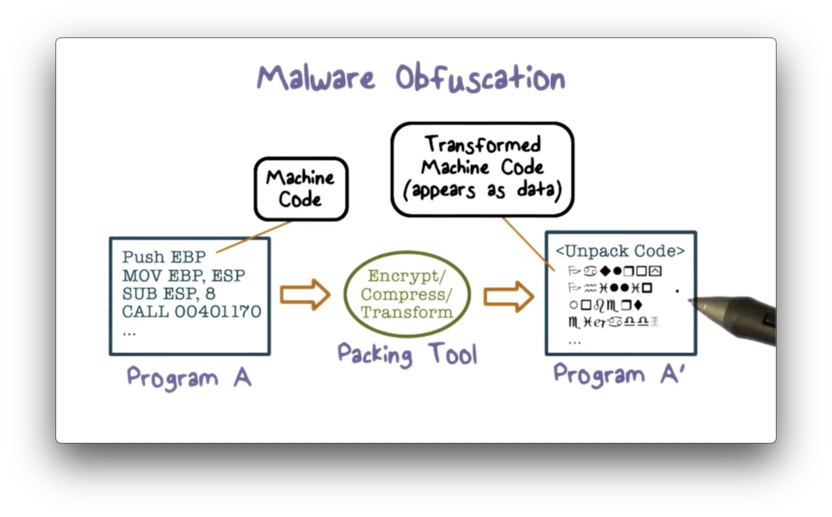

Malware Obfuscation

Packing refers to the process of compressing and encrypting part of an executable program. The result of packing is that part of the executable becomes data instead of instructions. To execute the packed instructions, the packing tool must include code in the packed executable that unpacks these instructions.

The packing program will encrypt the malware using a randomly generated key, which means that each subsequent packed malware will look completely different from the last. Consequently, a signature-based approach is not effective in detecting packed malware.
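The effect of a random key on signatures can be shown with a toy example. Here a repeating-key XOR stands in for a real packer's compression and encryption, a SHA-256 hash stands in for a signature, and the payload is a harmless placeholder string; all three are simplifying assumptions for illustration only.

```python
import hashlib
import os


def toy_pack(payload: bytes, key: bytes) -> bytes:
    """XOR `payload` with a repeating `key` (a stand-in for real packing)."""
    return bytes(b ^ key[i % len(key)] for i, b in enumerate(payload))


def toy_unpack(packed: bytes, key: bytes) -> bytes:
    """XOR is its own inverse, so unpacking reuses the same operation."""
    return toy_pack(packed, key)


def signature(data: bytes) -> str:
    """Toy signature: a hash over the exact bytes."""
    return hashlib.sha256(data).hexdigest()


payload = b"placeholder bytes standing in for program code"

# Two packings of the same payload with independently generated random keys.
key_a, key_b = os.urandom(16), os.urandom(16)
packed_a, packed_b = toy_pack(payload, key_a), toy_pack(payload, key_b)

print(signature(packed_a) == signature(packed_b))  # differs unless the keys collide
print(toy_unpack(packed_a, key_a) == payload)      # True
print(toy_unpack(packed_b, key_b) == payload)      # True
```

The two packed forms share no stable byte pattern to match on, yet both unpack to the same original bytes, which is the property that unpacking-based analysis relies on.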

Can we use the unpacking instructions as a signature to detect malware? Unfortunately not. Malware is not the only application that performs packing/unpacking. Legitimate programs may use packing to hide certain logic and/or data, often for intellectual property reasons such as copyright protection.

Unpacking

Most modern malware uses packing, and there are many packers available. Furthermore, there are hundreds of thousands of packed malware samples released to the Internet every day by attackers.

We cannot manually analyze all of the malware samples. Instead, we need an automatic approach to unpack and analyze malware. Such an approach has to be universally applicable to all of the malware samples.

We can run static analysis over the packed malware to get a set of instructions in the packed program. We can then run the malware and use dynamic analysis to identify instructions that are not present in the statically identified set of instructions. These instructions must have been unpacked just before execution.
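The comparison described above amounts to a set difference between what static analysis saw and what actually executed. In this sketch, instructions are represented by their addresses, and both the addresses and the function name are hypothetical.

```python
def unpacked_instructions(static_addresses, executed_trace):
    """Return addresses that were executed at runtime but were not identified
    as instructions by static analysis, in first-seen order."""
    static = set(static_addresses)
    seen = set()
    result = []
    for address in executed_trace:
        if address not in static and address not in seen:
            seen.add(address)
            result.append(address)
    return result


# Hypothetical data: static analysis finds only the unpacker stub ...
static_addresses = [0x401000, 0x401004, 0x401008]
# ... while the runtime trace also shows code executing from another region.
executed_trace = [0x401000, 0x401004, 0x401008, 0x500000, 0x500004, 0x500000]

print([hex(a) for a in unpacked_instructions(static_addresses, executed_trace)])
# ['0x500000', '0x500004']
```

The addresses that appear only in the runtime trace mark the region where unpacked code lives, which is where further analysis would focus.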

Once we identify the unpacked code, we can then apply other anti-malware techniques such as signature scanning to identify the malware logic. Since the unpacked malware code looks the same across malware instances, we can still use a signature-based approach to identify these infections.

Malware Analysis Quiz

Malware Analysis Quiz Solution

OMSCS Notes is made in NYC by Matt Schlenker.

Copyright © 2019-2023. All rights reserved.
